Applying Photography, Video, 3D and other Expertise to Forensic Analysis


Laurence R. Penn, 3D Animations/Technical Assistant

At DJS Associates, we are often called upon to analyze surveillance videos to make a region of interest easier to identify or to re-create the recorded scenario entirely. What may seem like a simple task actually relies on thorough review and consideration of many factors within the footage. Often these factors are subtle and only an experienced technician can identify the clues provided in the images.

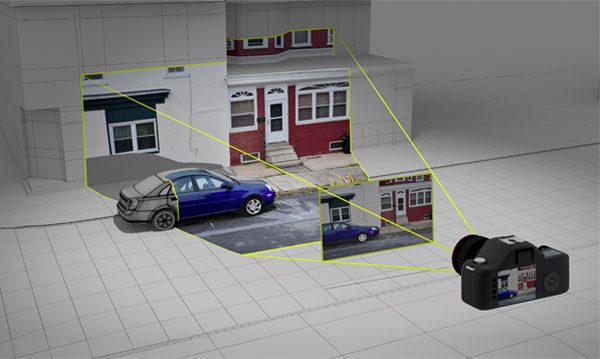

With 3D camera matching, evidence and surveillance imagery can be digitally processed and spatially analyzed to reconstruct a scene.

What is 3D camera matching?

3D camera matching is a software process in which a photo or video is analyzed to determine the camera’s properties, including its lens characteristics and its position and rotation in space, so that the camera can be virtually re-created in 3D software. Once the camera’s properties and orientation have been solved, the spatial relationships of features present in the photo or video can be analyzed further.

How is 3D camera matching performed?

To re-create a virtual camera from photo or video evidence, scale and distance data are required for some of the features visible in the image. DJS commonly captures this data through 3D LIDAR laser scans of a site or object. In software, multiple “trackers” are placed on various features in the 2D photograph. The same trackers are then placed in 3D space according to the 3D scan data. Once enough trackers have been identified and matched between the 2D image and the 3D data, the software can mathematically position the camera in space and determine the lens properties, such as its focal length.
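To give a feel for the math behind this step, the sketch below scores candidate cameras by reprojection error: each 3D scan point is projected through the candidate camera, and the result is compared with the matching 2D tracker. Camera solvers typically search for the camera that makes this error as small as possible. This is a simplified illustration, not DJS's workflow or the method of any particular software package. It assumes an ideal pinhole lens with no distortion, treats the camera position and rotation as already known, and searches over focal length only. All function names, points and values are hypothetical.

```python
import math

def project(point, cam_pos, rotation, focal_px, center):
    """Project a 3D world point to pixel coordinates with an ideal pinhole camera."""
    # Move the point into camera coordinates: Xc = R * (X - C).
    d = [p - c for p, c in zip(point, cam_pos)]
    xc, yc, zc = (sum(row[i] * d[i] for i in range(3)) for row in rotation)
    if zc <= 0:
        raise ValueError("point is behind the camera")
    # Perspective divide, then shift to the image center.
    return (center[0] + focal_px * xc / zc, center[1] + focal_px * yc / zc)

def rms_reprojection_error(points_3d, trackers_2d, cam_pos, rotation, focal_px, center):
    """Root-mean-square pixel distance between projected scan points and 2D trackers."""
    total = 0.0
    for point, (u, v) in zip(points_3d, trackers_2d):
        pu, pv = project(point, cam_pos, rotation, focal_px, center)
        total += (pu - u) ** 2 + (pv - v) ** 2
    return math.sqrt(total / len(points_3d))

# Hypothetical scan points in meters (stand-ins for features picked from scan data).
scan_points = [(1.0, 0.5, 0.0), (-2.0, 1.0, 3.0), (0.5, -1.0, 5.0), (3.0, 2.0, 8.0)]
cam_pos = (0.0, 1.5, -10.0)          # assumed known for this sketch
identity = [[1.0, 0.0, 0.0],         # camera axes aligned with the world axes
            [0.0, 1.0, 0.0],
            [0.0, 0.0, 1.0]]
center = (960.0, 540.0)              # center of a 1920 x 1080 image

# Synthetic 2D trackers, generated with a "true" focal length of 1200 pixels.
trackers = [project(p, cam_pos, identity, 1200.0, center) for p in scan_points]

# Try a range of candidate focal lengths and keep the one with the lowest error.
candidates = [float(f) for f in range(800, 1601, 50)]
best_focal = min(
    candidates,
    key=lambda f: rms_reprojection_error(scan_points, trackers, cam_pos, identity, f, center),
)
best_error = rms_reprojection_error(scan_points, trackers, cam_pos, identity, best_focal, center)
print(best_focal, best_error)
```

Because the trackers here are synthetic, the search recovers the 1200-pixel focal length that was used to generate them. With real imagery the error never reaches zero, and a solver adjusts position, rotation and lens parameters together rather than one value at a time.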

How can 3D camera matching be used in your case?

3D camera-matched evidence or surveillance imagery can benefit your case in many ways. It can establish the spatial and temporal circumstances present at the time the images were captured, and it can help create visuals for 3D animations. For example, if two vehicles are involved in a collision and have been photographed, the images can be analyzed to determine the position of the vehicles relative to their environment and to each other. Any skid marks present in the photos can be measured in 3D space. Once evidence has been placed in 3D space according to the image analysis, it can be viewed from any angle. If the imagery is video, the speed and path of a traveling vehicle can also be determined and re-created in 3D space.
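The speed determination mentioned above comes down to distance over time once a vehicle's position has been placed in 3D space for several video frames. The sketch below is a minimal illustration with made-up numbers. It assumes positions on the ground plane in meters and a constant, known frame rate, which would need to be verified for any real surveillance recording. The function name and data are hypothetical.

```python
import math

MPS_TO_MPH = 2.236936  # meters per second to miles per hour

def speeds_from_track(positions_m, frame_numbers, fps):
    """Return the average speed (m/s) between each pair of consecutive tracked positions."""
    speeds = []
    for i in range(1, len(positions_m)):
        distance = math.dist(positions_m[i - 1], positions_m[i])   # meters traveled
        elapsed = (frame_numbers[i] - frame_numbers[i - 1]) / fps   # seconds elapsed
        speeds.append(distance / elapsed)
    return speeds

# Hypothetical vehicle positions (x, y) in meters at three video frames.
positions = [(0.0, 0.0), (3.0, 4.0), (6.0, 8.0)]
frames = [0, 15, 30]

speeds = speeds_from_track(positions, frames, fps=30.0)
for s in speeds:
    print(f"{s:.1f} m/s = {s * MPS_TO_MPH:.1f} mph")
```

In this example the vehicle covers 5 meters every 15 frames, or half a second at 30 frames per second, so each segment works out to 10 m/s.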

Once a video or a photo sequence has been painstakingly analyzed and the results optimized and imported into a 3D program, the same images can be projected through the virtual camera onto 3D geometry to texture surfaces realistically and accurately. As an analogy, imagine projecting a photo of a lavish room with wood floors, intricate wallpaper and framed art into a blank room that has only white floors and walls; a viewer could be tricked into thinking the projected image showed how the room was actually decorated. Specialized photogrammetry and videogrammetry analysis tools for this purpose are a valuable supplement when used in conjunction with 3D scan data.
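Projecting an image through the virtual camera amounts to asking, for each point on the 3D geometry, which pixel of the photo it lands on. The sketch below shows that lookup for single points under simplifying assumptions: an ideal pinhole camera, points already expressed in camera coordinates (x to the right, y down, z forward), and no check for surfaces hidden behind other surfaces. The function name and values are hypothetical and do not represent any specific 3D package.

```python
def projected_uv(vertex_cam, focal_px, width, height):
    """Return normalized image coordinates (u, v) for a point in camera space,
    or None if the point is behind the camera or falls outside the photo."""
    x, y, z = vertex_cam
    if z <= 0:
        return None  # behind the camera, so the photo cannot cover it
    # Pinhole projection to pixel coordinates, measured from the image center.
    px = width / 2 + focal_px * x / z
    py = height / 2 + focal_px * y / z
    if not (0 <= px <= width and 0 <= py <= height):
        return None  # outside the frame of the photo
    # Normalize to the 0..1 range used for texture lookups.
    return (px / width, py / height)

# Hypothetical 1920 x 1080 photo with a focal length of 1000 pixels.
print(projected_uv((0.0, 0.0, 5.0), 1000.0, 1920, 1080))    # straight ahead: image center
print(projected_uv((10.0, 0.0, 5.0), 1000.0, 1920, 1080))   # far to the side: outside the frame
print(projected_uv((0.0, 0.0, -2.0), 1000.0, 1920, 1080))   # behind the camera
```

Points that return None receive no texture from this photo, which is one reason several camera-matched images are often useful for covering a scene.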

Having coded a range of interactive projects in multiple programming languages, I am able to apply my skills to improve 3D workflows and use software to its full capacity, whether at the command line or through APIs (Application Programming Interfaces) and SDKs (Software Development Kits). Programming with 3D game engines allows us to incorporate interactive features into DJS animations. Viewers can also actively participate in 360-degree virtual reality animations, with the freedom to look around a scene as it unfolds.

No two cases are the same, so understanding what it takes to analyze video footage and photos to produce accurate 3D animations is invaluable. I’d love to talk to you about how I can help in your case. Feel free to contact me with any questions.

Laurence R. Penn is a 3D Animator with DJS Associates and can be reached via email at experts@forensicDJS.com or via phone at 215-659-2010.

Tags: 3D Animation | 3D Camera Matching | 3D Modeler | Engineering Animations | Laurence R. Penn