Videogrammetry: Extracting Temporal Spatial Data for 3D Reconstruction

Laurence R. Penn, CFVT, Senior Forensic Animation/Video Specialist

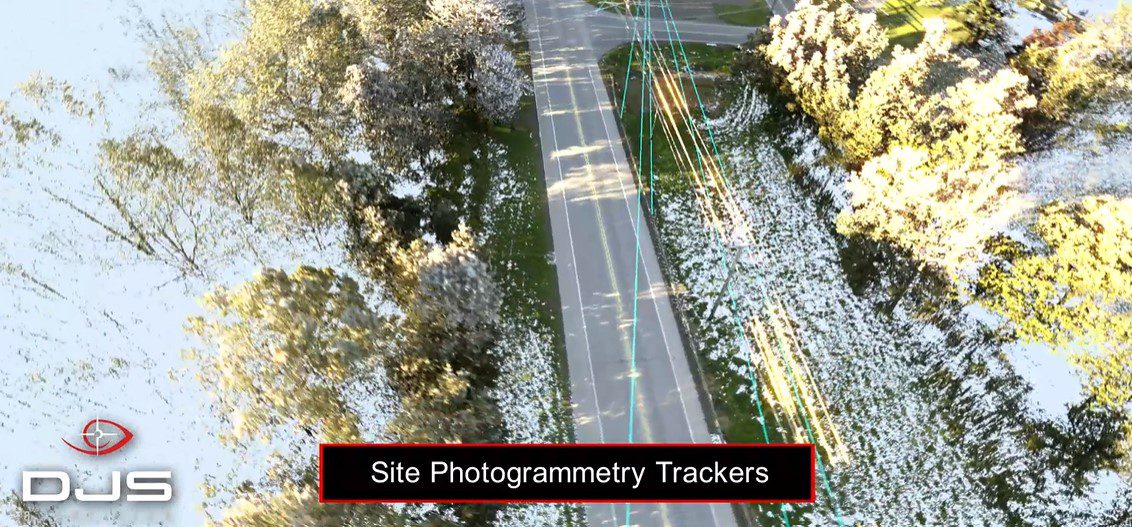

To establish the position of vehicles in space and time, two-dimensional features captured in surveillance video are reprojected to their three-dimensional locations using high-density laser scan data. Because surveillance cameras are typically locked in position and rotation, a single frame is enough to calculate the camera’s position and orientation relative to the to-scale laser scan data of the environment it recorded.
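As a rough illustration of the camera-matching step, the sketch below uses a simple pinhole model to measure how well a candidate camera pose explains a set of correspondences between scan points and pixels in a single frame. It is a minimal example under stated assumptions, not the workflow used in this analysis: the function names `project` and `rms_reprojection_error`, the single focal length in pixels, and the convention that the rotation maps world coordinates into a camera looking down +Z are all hypothetical choices made for illustration.

```python
import math


def project(point_world, rotation, translation, focal_px, principal_point):
    """Project a 3D world point to pixel coordinates with a pinhole model.

    Assumed convention: X_cam = rotation * X_world + translation, where
    rotation is a 3x3 matrix given as a list of rows and the camera looks
    down its +Z axis. Lens distortion is ignored here.
    """
    x_cam = [
        sum(rotation[r][c] * point_world[c] for c in range(3)) + translation[r]
        for r in range(3)
    ]
    if x_cam[2] <= 0:
        raise ValueError("point is behind the camera")
    u = focal_px * x_cam[0] / x_cam[2] + principal_point[0]
    v = focal_px * x_cam[1] / x_cam[2] + principal_point[1]
    return (u, v)


def rms_reprojection_error(correspondences, rotation, translation,
                           focal_px, principal_point):
    """Root-mean-square pixel distance between observed and predicted points.

    correspondences is a list of (point_world, observed_pixel) pairs, for
    example scan points matched to features picked in one video frame.
    """
    if not correspondences:
        raise ValueError("at least one correspondence is required")
    total = 0.0
    for point_world, observed_px in correspondences:
        u, v = project(point_world, rotation, translation,
                       focal_px, principal_point)
        total += (u - observed_px[0]) ** 2 + (v - observed_px[1]) ** 2
    return math.sqrt(total / len(correspondences))


# Hypothetical example values: identity rotation, camera at the origin.
identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
pairs = [
    ((1.0, 0.5, 10.0), (1060.0, 590.0)),
    ((-2.0, 1.0, 20.0), (860.0, 590.0)),
]
error_px = rms_reprojection_error(pairs, identity, (0.0, 0.0, 0.0),
                                  1000.0, (960.0, 540.0))
print(f"RMS reprojection error: {error_px:.3f} px")
```

A pose solver would adjust the rotation and translation until this error is as small as possible. Because the camera does not move, that solution can then be reused for every frame of the recording.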

Accurate photogrammetry requires rectilinear images, so part of the analysis is to correct any lens distortion present in the video. Lens distortion is common with surveillance cameras and results from compressing a wide field of view into the frame of a standard video resolution. With the lens distortion corrected and the camera position calculated, features on the vehicles are tracked in two dimensions across multiple frames and correlated, to scale, with their three-dimensional positions using laser scan data of the vehicles.
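The two steps described above can be sketched in a few lines. The example below first removes radial distortion from a tracked pixel and then casts a ray from the camera through that pixel to a horizontal plane. Several assumptions are made purely for illustration: distortion is modeled with a single radial coefficient `k1` (the leading term of the common Brown-Conrady form), the tracked feature is taken to sit at a known height `plane_z` read from the vehicle scan, and the names `undistort_normalized` and `pixel_to_world_on_plane` are hypothetical. Real footage may need more distortion terms and a different way of constraining depth.

```python
def undistort_normalized(xd, yd, k1, iterations=20):
    """Invert the assumed model x_d = x_u * (1 + k1 * r_u^2).

    Inputs and outputs are normalized image coordinates (pixel offset from
    the principal point divided by the focal length). The inverse has no
    simple closed form, so a fixed-point iteration is used; it converges
    for the moderate distortion assumed in this sketch.
    """
    xu, yu = xd, yd
    for _ in range(iterations):
        scale = 1.0 + k1 * (xu * xu + yu * yu)
        xu, yu = xd / scale, yd / scale
    return (xu, yu)


def pixel_to_world_on_plane(pixel, focal_px, principal_point, k1,
                            cam_to_world, cam_center, plane_z):
    """Intersect the viewing ray through a pixel with the plane z = plane_z.

    cam_to_world is a 3x3 rotation (list of rows) taking camera-frame
    directions into the world frame; cam_center is the camera position in
    world coordinates. The camera is assumed to look down its +Z axis.
    """
    xd = (pixel[0] - principal_point[0]) / focal_px
    yd = (pixel[1] - principal_point[1]) / focal_px
    xu, yu = undistort_normalized(xd, yd, k1)
    ray_cam = (xu, yu, 1.0)
    ray_world = [
        sum(cam_to_world[r][c] * ray_cam[c] for c in range(3))
        for r in range(3)
    ]
    if abs(ray_world[2]) < 1e-12:
        raise ValueError("ray is parallel to the plane")
    distance = (plane_z - cam_center[2]) / ray_world[2]
    if distance <= 0:
        raise ValueError("plane is behind the camera")
    return tuple(cam_center[i] + distance * ray_world[i] for i in range(3))
```

Applying `pixel_to_world_on_plane` to the same feature in successive frames yields a sequence of world positions. Combined with the frame timestamps, those positions describe the vehicle's motion over time.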

The inset video demonstrates the steps in three-dimensional accident reconstruction analysis resulting in highly accurate and measurable results. View additional perspectives of this incident in the Bus v. Motorcycle: Collision Synchronized Video Demonstrative.
